“Tools?” scoffed Kalisti, “Tools are for people who have nothing better to do than think things through and make sensible plans.”

― Laini Taylor, Muse of Nightmares

When we left off in Part 1 of my CMIYC2023 Writeup, I had cracked a measly 437 passwords. Yes, I had a Jupyter Notebook set up to perform analysis, but what I really needed was more cracked passwords to analyze. To that end, I started off with some basic exploratory attacks very similar to what I detailed in previous competitions [Crack the Con, CMIYC2022].

These included running JtR Single Mode with the RockYou, dic-0294, Alter_Hacker, and the sraveau-Wikipedia wordlists. Basically these attacks are about as dumb and untargeted as you can get. But they are also easy and quick to run against fast hash types. And they can be helpful! The Wikipedia wordlist in particular highlighted that Cyrillic passwords would likely play a role in this competition.

Running an attack using a Russian wordlist (from here) didn't yield many additional cracks, though. After some Googling, it looked like some of these were Ukrainian words rather than Russian ones, so I tried this dictionary as well [link]. One thing I was able to see, though, is that all the usernames are also Cyrillic. This will probably be useful for targeting tougher hashes.

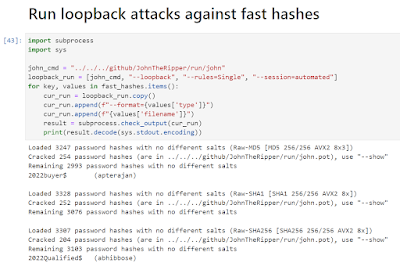

Around this time, I also created a second Notebook in JupyterLab to automate some of the common tasks that I am always doing. For example, running loopback attacks against fast hashes using previously cracked passwords is a great source of new cracks. That makes it a good task to automate, since I'm constantly doing it in the background.

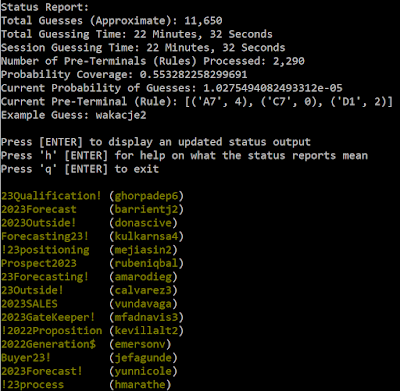
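As a rough idea of what such a cell can look like, here is a minimal sketch (not the actual notebook code). The `build_loopback_cmd` helper, the file names, and the list of formats are all hypothetical, and it assumes a JtR jumbo build where `--loopback` re-feeds the pot file's cracked plaintexts as the wordlist.

```python
import shlex

def build_loopback_cmd(john_path, hash_format, hashlist, rules=None):
    """Build a John the Ripper loopback command line (hypothetical helper)."""
    cmd = [john_path, "--loopback", f"--format={hash_format}"]
    if rules:
        # Assumes a ruleset with this name exists in your john.conf.
        cmd.append(f"--rules={rules}")
    cmd.append(hashlist)
    return cmd

# Placeholder fast-hash lists; swap in your own files and formats.
jobs = [
    ("raw-md5", "raw_md5_hashlist.txt"),
    ("raw-sha256", "raw_sha256_hashlist.txt"),
]

for hash_format, hashlist in jobs:
    cmd = build_loopback_cmd("john", hash_format, hashlist)
    # Print the command; hand cmd to subprocess.run(cmd) to actually launch it.
    print(shlex.join(cmd))
```

Building the command as a list keeps quoting problems out of the picture if a path ever contains spaces.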

I also created cells for similar activities that I'm constantly doing such as creating a single list of all the plaintext cracked passwords I can use for training. This in turn allows me to quickly create a PCFG training set on the full list for cracking fast and medium-speed hashes. Aka:

- python3 trainer.py -r CMIYC2023 -t ../../research/cmiyc/2023/all_plains.txt

- python3 ../../../github/pcfg_cracker/pcfg_guesser.py -r CMIYC2023 | ../../../github/JohnTheRipper/run/john --format=raw-sha256 -stdin raw_sha256_hashlist.txt
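For the "single list of plaintexts" step, a minimal sketch might look like the following. It assumes pot-file style input where each line is `hash:plaintext` and the hash part contains no colon; formats that break that assumption would need different handling. The function name and sample lines are made up for illustration.

```python
def plains_from_pot(lines):
    """Extract plaintexts from pot-file style lines of the form 'hash:plaintext'."""
    plains = []
    for line in lines:
        line = line.rstrip("\r\n")
        if ":" not in line:
            continue  # skip blank or malformed lines
        # Split on the first colon only, so passwords containing ':' stay intact.
        _, plain = line.split(":", 1)
        plains.append(plain)
    return plains

# Made-up sample lines, not real contest data.
sample = [
    "hash1:example1\n",
    "hash2:pass:word\n",
    "\n",
]
print(plains_from_pot(sample))
```

Duplicates are deliberately kept here, on the assumption that how often a password shows up is useful signal for training.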

This approach also netted me my first two bcrypt cracks.

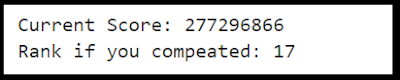

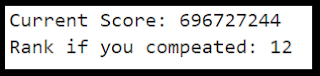

Since I'm doing this after the contest and can't submit cracks, I also created a quick "scoreboard" in Jupyter to estimate how I'd stack up against the other street teams:
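The scoring logic boils down to multiplying the number of cracks per hash type by that type's point value. Here is a minimal sketch of that arithmetic; the function name is hypothetical and the numbers are placeholders, not the real CMIYC2023 point values or crack counts.

```python
def estimate_score(cracked_counts, points_per_crack):
    """Sum, over hash types, the number of cracks times that type's point value."""
    return sum(
        count * points_per_crack[hash_type]
        for hash_type, count in cracked_counts.items()
    )

# Placeholder values only: NOT the contest's real point table or real counts.
points = {"raw-md5": 1, "raw-sha256": 2, "bcrypt": 50}
cracked = {"raw-md5": 100, "raw-sha256": 40, "bcrypt": 2}
print(estimate_score(cracked, points))
```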

I want to stress again: I'm cheating. These teams actually competed in the competition. I'm leisurely sitting down for short cracking sessions while writing this blog post. Which is another way of saying my numbers are even worse than they appear ;p

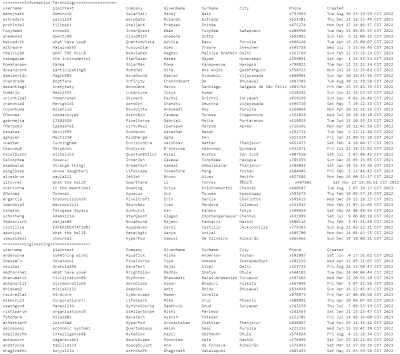

Well, I need to do something about that! Next step is to look through my updated meta/cracked list and try to spot patterns:

I now have cracks for every department, but it doesn't seem like the individual departments follow a set pattern. There are a couple of different ways to go from here. The first thing to do is create some custom rules to match patterns that I'm seeing in the plaintexts.

Running the rules above and several others yielded a few more cracks. The other thing I did was create some custom PRINCE wordlists from the cracked passwords using PRINCE-LING from the pcfg toolset.

I created both a full wordlist (as seen above) and wordlists containing only 500 values for targeting slower hash types. Running PRINCE attacks then helped me identify a few more rules that yielded additional cracks.

Another promising area was targeting accounts with non-ASCII usernames using non-ASCII guesses.

Side note: If you ever have to identify non-ASCII characters using Python, the following check is highly effective. It strips the non-ASCII characters and then checks whether the word got shorter:

- if len(key.encode("ascii", "ignore")) < len(key):
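Wrapped in a small function, that check can be used to filter a list of usernames. This is just a sketch; the function name and the sample usernames are made up for illustration.

```python
def has_non_ascii(text):
    """Return True if text contains at least one non-ASCII character."""
    # Encoding with errors="ignore" silently drops anything outside ASCII,
    # so a shorter result means at least one character was dropped.
    return len(text.encode("ascii", "ignore")) < len(text)

# Made-up usernames, not taken from the contest data.
usernames = ["alice", "Олена", "bob42", "Іван"]
non_ascii = [name for name in usernames if has_non_ascii(name)]
print(non_ascii)
```

On Python 3.7 and later, `not text.isascii()` gives the same answer with less ceremony.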

In addition, I later added in the GivenName and SurName fields from the metadata (not depicted above) which helped a lot too.

As we're continuing to throw things against the wall, let's try and build more wordlists from all the metadata. Many of the companies seem to be two words concatenated together. Let's strip them out and break them up.
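A minimal sketch of the splitting step is below. It assumes the two words are joined CamelCase style (a capital letter starts each word) and only handles ASCII letters; the function names and company names are invented for illustration.

```python
import re

def split_company(name):
    """Split a concatenated name like 'RedLantern' into its component words."""
    # Assumption: each word starts with a capital letter (CamelCase).
    return re.findall(r"[A-Z][a-z]+|[a-z]+|\d+", name)

def company_wordlist(companies):
    """Return full names plus their component words, de-duplicated in order."""
    words = []
    seen = set()
    for name in companies:
        for word in [name] + split_company(name):
            if word not in seen:
                seen.add(word)
                words.append(word)
    return words

# Invented company names, not from the contest metadata.
print(company_wordlist(["RedLantern", "RedRiver"]))
```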

And ... using dictionaries based on the company metadata was totally unhelpful. I did not get a single additional crack using those dictionaries.

After all of that, let's check where I'm at with my score:

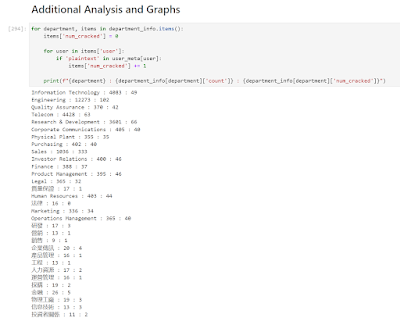

I really need to switch things up because that's not even close to respectable. Looking through the list of cracked passwords no new patterns stood out, but then I decided to map out my progress targeting each department in my Jupyter Notebook.

OMG. This hadn't been apparent in the previous view of cracked hashes, since I had forgotten how many more IT hashes there were than hashes for any other group, but what I had basically been doing was cracking Sales passwords. I had that pattern down pat, and my PCFG cracker was trained mostly on Sales passwords. How about I run a PCFG attack, trained on the CMIYC2023 plains, against the Sales-only bcrypt hashes? Creating the hashlist in Jupyter was easy:
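The general shape of both steps (per-department progress, then a department-specific hashlist) can be sketched as follows. This is not the actual notebook cell: the record layout (`department`, `hash`, `cracked` keys), the function names, and the sample data are all assumptions made for illustration.

```python
from collections import Counter

def progress_by_department(records):
    """Return {department: (cracked, total)} for a list of account records."""
    total = Counter(r["department"] for r in records)
    cracked = Counter(r["department"] for r in records if r["cracked"])
    return {dept: (cracked[dept], total[dept]) for dept in total}

def hashes_for(records, department, prefix):
    """Collect uncracked hashes for one department whose hash starts with prefix."""
    return [
        r["hash"]
        for r in records
        if r["department"] == department
        and not r["cracked"]
        and r["hash"].startswith(prefix)
    ]

# Placeholder records; the hash strings are stand-ins, not real hashes.
records = [
    {"department": "Sales", "hash": "$2b$placeholder1", "cracked": True},
    {"department": "Sales", "hash": "$2b$placeholder2", "cracked": False},
    {"department": "IT", "hash": "$2b$placeholder3", "cracked": False},
]

print(progress_by_department(records))
# bcrypt hashes start with "$2" ($2a$, $2b$, $2y$).
print(hashes_for(records, "Sales", "$2"))
```

Writing the returned list out one hash per line gives a file that can be passed to the cracker directly.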

I then ran a PCFG attack against the Sales-only bcrypt hashes using the following command:

- python3 pcfg_guesser.py -r CMIYC2023 --skip_brute | john --stdin --format=bcrypt sales_hashes.txt

That was super effective!

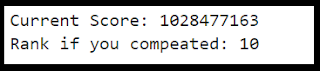

I want to stress that this was all done using John the Ripper on a single laptop. And these are bcrypt hashes I'm targeting. They are super slow and annoying even with better cracking rigs! After about an hour of cracking time, my score had increased significantly.

I then started running the same attack against other hash types such as sha256crypt and had similar success.

Next, I realized I needed to build up my dictionary. To do this, I took a common prefix (2023), added a '!' to the end, and used JtR's Mask mode to exhaust alpha characters for the remaining letters, trying both an uppercase and a lowercase first letter and keeping the rest lowercase:

- john --mask=2023[a-Z]?l?l?l?l?l?l! --format=raw-md5 raw_md5_hashlist.txt
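Before launching a mask like this, it helps to count how many candidates it will generate. The sketch below does that arithmetic for the mask as described in the prose (fixed "2023" prefix, one letter in either case, six lowercase letters, fixed "!"); the `keyspace` helper is hypothetical, and it says nothing about how JtR itself parses the mask.

```python
from math import prod

def keyspace(charset_sizes):
    """Number of candidates for a mask, given the charset size at each position."""
    return prod(charset_sizes)

# "2023" and "!" are fixed (1 choice each), the first letter can be upper or
# lower case (52 choices), and the remaining six letters are lowercase (26 each).
sizes = [1, 1, 1, 1, 52] + [26] * 6 + [1]
print(keyspace(sizes))  # 16063620352
```

Roughly 16 billion candidates is quick work against a fast hash like raw MD5, which is why this kind of exhaustive search is only practical there and not against bcrypt.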

I did several variations of that mask and found a few new base words, but not many. I then re-ran the PCFG trainer to update my Sales ruleset and re-ran my attacks to net a few more cracked hashes. Still, there was certainly room for improvement, and since I was semi-happy with my wordlist, the next thing to do was look for new mangling rules. To that end, I re-ran JtR's mask attacks with a known plaintext word, "Sales". For example:

- john --mask=?a[Ss]ales?a?a?a?a --format=raw-md5 raw_md5_hashlist.txt

This didn't yield more cracks, so I was very kerfluffled about that. There's obviously a good chunk of passwords I'm still missing in this group. Still, running my PCFG attack again (retrained with the newly found base words) against the tougher "Sales Only" hashes had a big impact on my score.

I know these calculated scores have real "if the game lasted one more round I would have won" little brother energy. I have a ton of respect for everyone who did compete in CMIYC and want to stress that if I had been doing this live, in Vegas, with a million other things going on, I would have done much, much worse. But it's helpful to know that, without solving any of the "real" challenges in the contest, there were still ways to make progress with limited cracking resources.

Conclusion:

I think this is a good place to wrap up this blog post. All these attacks were run on my laptop with John the Ripper, and I think it shows a base level of capability that anyone can reach. I also hope this highlighted the value of using Jupyter Notebooks, not just for password cracking, but in any data analysis task you might find yourself doing.

I'd specifically like to thank the KoreLogic team for running yet another great contest! I had a ton of fun digging into it and even more fun talking to everyone at their booth at Defcon! These contests require a ton of work to set up and they help the community so much so I really appreciate all the work their team puts into this.

One thing I'd like you to walk away from these entries with is how useful a tool JupyterLab can be for all your data analysis tasks. It was a long time before I started using it myself, and I'm constantly surprised by how easily it integrates into my workflows and how much more productive it makes me. I highly recommend checking it out, even if you aren't cracking passwords.

I have a lot of ideas about where to go next. I'm tempted to write a follow-up blog entry that goes full spoiler into this contest, looks at how the plains were generated, and then uses Hashcat on a more powerful machine to show how to target them (such as using Hashcat's amazing -a 9 association mode). I also have some improvements I need to make to my PCFG toolset based on feedback from other people who used it during this contest. Finally, I want to clean up the Jupyter notebook that I created for these posts and make it available on GitHub. When I finally get around to that, I'll post a link on this blog entry. These were fun entries to write, and this was a great contest to (belatedly) participate in. Thanks again to KoreLogic and congratulations to all the teams that participated.
